diff options

Diffstat (limited to 'deps/npm/node_modules/sha')

25 files changed, 1665 insertions, 862 deletions

diff --git a/deps/npm/node_modules/sha/README.md b/deps/npm/node_modules/sha/README.md index b1b45f0c3c..c881194c21 100644 --- a/deps/npm/node_modules/sha/README.md +++ b/deps/npm/node_modules/sha/README.md @@ -2,9 +2,9 @@ Check and get file hashes (using any algorithm)

-[](https://travis-ci.org/ForbesLindesay/sha)

-[](https://gemnasium.com/ForbesLindesay/sha)

-[](http://badge.fury.io/js/sha)

+[](https://travis-ci.org/ForbesLindesay/sha)

+[](https://gemnasium.com/ForbesLindesay/sha)

+[](http://badge.fury.io/js/sha)

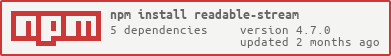

## Installation

diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/README.md b/deps/npm/node_modules/sha/node_modules/readable-stream/README.md index be976683e4..34c1189792 100644 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/README.md +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/README.md @@ -1,768 +1,15 @@ # readable-stream -A new class of streams for Node.js +***Node-core streams for userland*** -This module provides the new Stream base classes introduced in Node -v0.10, for use in Node v0.8. You can use it to have programs that -have to work with node v0.8, while being forward-compatible for v0.10 -and beyond. When you drop support for v0.8, you can remove this -module, and only use the native streams. +[](https://nodei.co/npm/readable-stream/) +[](https://nodei.co/npm/readable-stream/) -This is almost exactly the same codebase as appears in Node v0.10. -However: +This package is a mirror of the Streams2 and Streams3 implementations in Node-core. -1. The exported object is actually the Readable class. Decorating the - native `stream` module would be global pollution. -2. In v0.10, you can safely use `base64` as an argument to - `setEncoding` in Readable streams. However, in v0.8, the - StringDecoder class has no `end()` method, which is problematic for - Base64. So, don't use that, because it'll break and be weird. +If you want to guarantee a stable streams base, regardless of what version of Node you, or the users of your libraries are using, use **readable-stream** *only* and avoid the *"stream"* module in Node-core. -Other than that, the API is the same as `require('stream')` in v0.10, -so the API docs are reproduced below. +**readable-stream** comes in two major versions, v1.0.x and v1.1.x. The former tracks the Streams2 implementation in Node 0.10, including bug-fixes and minor improvements as they are added. The latter tracks Streams3 as it develops in Node 0.11; we will likely see a v1.2.x branch for Node 0.12. ----------- +**readable-stream** uses proper patch-level versioning so if you pin to `"~1.0.0"` you’ll get the latest Node 0.10 Streams2 implementation, including any fixes and minor non-breaking improvements. The patch-level versions of 1.0.x and 1.1.x should mirror the patch-level versions of Node-core releases. You should prefer the **1.0.x** releases for now and when you’re ready to start using Streams3, pin to `"~1.1.0"` - Stability: 2 - Unstable - -A stream is an abstract interface implemented by various objects in -Node. For example a request to an HTTP server is a stream, as is -stdout. Streams are readable, writable, or both. All streams are -instances of [EventEmitter][] - -You can load the Stream base classes by doing `require('stream')`. -There are base classes provided for Readable streams, Writable -streams, Duplex streams, and Transform streams. - -## Compatibility - -In earlier versions of Node, the Readable stream interface was -simpler, but also less powerful and less useful. - -* Rather than waiting for you to call the `read()` method, `'data'` - events would start emitting immediately. If you needed to do some - I/O to decide how to handle data, then you had to store the chunks - in some kind of buffer so that they would not be lost. -* The `pause()` method was advisory, rather than guaranteed. This - meant that you still had to be prepared to receive `'data'` events - even when the stream was in a paused state. - -In Node v0.10, the Readable class described below was added. For -backwards compatibility with older Node programs, Readable streams -switch into "old mode" when a `'data'` event handler is added, or when -the `pause()` or `resume()` methods are called. The effect is that, -even if you are not using the new `read()` method and `'readable'` -event, you no longer have to worry about losing `'data'` chunks. - -Most programs will continue to function normally. However, this -introduces an edge case in the following conditions: - -* No `'data'` event handler is added. -* The `pause()` and `resume()` methods are never called. - -For example, consider the following code: - -```javascript -// WARNING! BROKEN! -net.createServer(function(socket) { - - // we add an 'end' method, but never consume the data - socket.on('end', function() { - // It will never get here. - socket.end('I got your message (but didnt read it)\n'); - }); - -}).listen(1337); -``` - -In versions of node prior to v0.10, the incoming message data would be -simply discarded. However, in Node v0.10 and beyond, the socket will -remain paused forever. - -The workaround in this situation is to call the `resume()` method to -trigger "old mode" behavior: - -```javascript -// Workaround -net.createServer(function(socket) { - - socket.on('end', function() { - socket.end('I got your message (but didnt read it)\n'); - }); - - // start the flow of data, discarding it. - socket.resume(); - -}).listen(1337); -``` - -In addition to new Readable streams switching into old-mode, pre-v0.10 -style streams can be wrapped in a Readable class using the `wrap()` -method. - -## Class: stream.Readable - -<!--type=class--> - -A `Readable Stream` has the following methods, members, and events. - -Note that `stream.Readable` is an abstract class designed to be -extended with an underlying implementation of the `_read(size)` -method. (See below.) - -### new stream.Readable([options]) - -* `options` {Object} - * `highWaterMark` {Number} The maximum number of bytes to store in - the internal buffer before ceasing to read from the underlying - resource. Default=16kb - * `encoding` {String} If specified, then buffers will be decoded to - strings using the specified encoding. Default=null - * `objectMode` {Boolean} Whether this stream should behave - as a stream of objects. Meaning that stream.read(n) returns - a single value instead of a Buffer of size n - -In classes that extend the Readable class, make sure to call the -constructor so that the buffering settings can be properly -initialized. - -### readable.\_read(size) - -* `size` {Number} Number of bytes to read asynchronously - -Note: **This function should NOT be called directly.** It should be -implemented by child classes, and called by the internal Readable -class methods only. - -All Readable stream implementations must provide a `_read` method -to fetch data from the underlying resource. - -This method is prefixed with an underscore because it is internal to -the class that defines it, and should not be called directly by user -programs. However, you **are** expected to override this method in -your own extension classes. - -When data is available, put it into the read queue by calling -`readable.push(chunk)`. If `push` returns false, then you should stop -reading. When `_read` is called again, you should start pushing more -data. - -The `size` argument is advisory. Implementations where a "read" is a -single call that returns data can use this to know how much data to -fetch. Implementations where that is not relevant, such as TCP or -TLS, may ignore this argument, and simply provide data whenever it -becomes available. There is no need, for example to "wait" until -`size` bytes are available before calling `stream.push(chunk)`. - -### readable.push(chunk) - -* `chunk` {Buffer | null | String} Chunk of data to push into the read queue -* return {Boolean} Whether or not more pushes should be performed - -Note: **This function should be called by Readable implementors, NOT -by consumers of Readable subclasses.** The `_read()` function will not -be called again until at least one `push(chunk)` call is made. If no -data is available, then you MAY call `push('')` (an empty string) to -allow a future `_read` call, without adding any data to the queue. - -The `Readable` class works by putting data into a read queue to be -pulled out later by calling the `read()` method when the `'readable'` -event fires. - -The `push()` method will explicitly insert some data into the read -queue. If it is called with `null` then it will signal the end of the -data. - -In some cases, you may be wrapping a lower-level source which has some -sort of pause/resume mechanism, and a data callback. In those cases, -you could wrap the low-level source object by doing something like -this: - -```javascript -// source is an object with readStop() and readStart() methods, -// and an `ondata` member that gets called when it has data, and -// an `onend` member that gets called when the data is over. - -var stream = new Readable(); - -source.ondata = function(chunk) { - // if push() returns false, then we need to stop reading from source - if (!stream.push(chunk)) - source.readStop(); -}; - -source.onend = function() { - stream.push(null); -}; - -// _read will be called when the stream wants to pull more data in -// the advisory size argument is ignored in this case. -stream._read = function(n) { - source.readStart(); -}; -``` - -### readable.unshift(chunk) - -* `chunk` {Buffer | null | String} Chunk of data to unshift onto the read queue -* return {Boolean} Whether or not more pushes should be performed - -This is the corollary of `readable.push(chunk)`. Rather than putting -the data at the *end* of the read queue, it puts it at the *front* of -the read queue. - -This is useful in certain use-cases where a stream is being consumed -by a parser, which needs to "un-consume" some data that it has -optimistically pulled out of the source. - -```javascript -// A parser for a simple data protocol. -// The "header" is a JSON object, followed by 2 \n characters, and -// then a message body. -// -// Note: This can be done more simply as a Transform stream. See below. - -function SimpleProtocol(source, options) { - if (!(this instanceof SimpleProtocol)) - return new SimpleProtocol(options); - - Readable.call(this, options); - this._inBody = false; - this._sawFirstCr = false; - - // source is a readable stream, such as a socket or file - this._source = source; - - var self = this; - source.on('end', function() { - self.push(null); - }); - - // give it a kick whenever the source is readable - // read(0) will not consume any bytes - source.on('readable', function() { - self.read(0); - }); - - this._rawHeader = []; - this.header = null; -} - -SimpleProtocol.prototype = Object.create( - Readable.prototype, { constructor: { value: SimpleProtocol }}); - -SimpleProtocol.prototype._read = function(n) { - if (!this._inBody) { - var chunk = this._source.read(); - - // if the source doesn't have data, we don't have data yet. - if (chunk === null) - return this.push(''); - - // check if the chunk has a \n\n - var split = -1; - for (var i = 0; i < chunk.length; i++) { - if (chunk[i] === 10) { // '\n' - if (this._sawFirstCr) { - split = i; - break; - } else { - this._sawFirstCr = true; - } - } else { - this._sawFirstCr = false; - } - } - - if (split === -1) { - // still waiting for the \n\n - // stash the chunk, and try again. - this._rawHeader.push(chunk); - this.push(''); - } else { - this._inBody = true; - var h = chunk.slice(0, split); - this._rawHeader.push(h); - var header = Buffer.concat(this._rawHeader).toString(); - try { - this.header = JSON.parse(header); - } catch (er) { - this.emit('error', new Error('invalid simple protocol data')); - return; - } - // now, because we got some extra data, unshift the rest - // back into the read queue so that our consumer will see it. - var b = chunk.slice(split); - this.unshift(b); - - // and let them know that we are done parsing the header. - this.emit('header', this.header); - } - } else { - // from there on, just provide the data to our consumer. - // careful not to push(null), since that would indicate EOF. - var chunk = this._source.read(); - if (chunk) this.push(chunk); - } -}; - -// Usage: -var parser = new SimpleProtocol(source); -// Now parser is a readable stream that will emit 'header' -// with the parsed header data. -``` - -### readable.wrap(stream) - -* `stream` {Stream} An "old style" readable stream - -If you are using an older Node library that emits `'data'` events and -has a `pause()` method that is advisory only, then you can use the -`wrap()` method to create a Readable stream that uses the old stream -as its data source. - -For example: - -```javascript -var OldReader = require('./old-api-module.js').OldReader; -var oreader = new OldReader; -var Readable = require('stream').Readable; -var myReader = new Readable().wrap(oreader); - -myReader.on('readable', function() { - myReader.read(); // etc. -}); -``` - -### Event: 'readable' - -When there is data ready to be consumed, this event will fire. - -When this event emits, call the `read()` method to consume the data. - -### Event: 'end' - -Emitted when the stream has received an EOF (FIN in TCP terminology). -Indicates that no more `'data'` events will happen. If the stream is -also writable, it may be possible to continue writing. - -### Event: 'data' - -The `'data'` event emits either a `Buffer` (by default) or a string if -`setEncoding()` was used. - -Note that adding a `'data'` event listener will switch the Readable -stream into "old mode", where data is emitted as soon as it is -available, rather than waiting for you to call `read()` to consume it. - -### Event: 'error' - -Emitted if there was an error receiving data. - -### Event: 'close' - -Emitted when the underlying resource (for example, the backing file -descriptor) has been closed. Not all streams will emit this. - -### readable.setEncoding(encoding) - -Makes the `'data'` event emit a string instead of a `Buffer`. `encoding` -can be `'utf8'`, `'utf16le'` (`'ucs2'`), `'ascii'`, or `'hex'`. - -The encoding can also be set by specifying an `encoding` field to the -constructor. - -### readable.read([size]) - -* `size` {Number | null} Optional number of bytes to read. -* Return: {Buffer | String | null} - -Note: **This function SHOULD be called by Readable stream users.** - -Call this method to consume data once the `'readable'` event is -emitted. - -The `size` argument will set a minimum number of bytes that you are -interested in. If not set, then the entire content of the internal -buffer is returned. - -If there is no data to consume, or if there are fewer bytes in the -internal buffer than the `size` argument, then `null` is returned, and -a future `'readable'` event will be emitted when more is available. - -Calling `stream.read(0)` will always return `null`, and will trigger a -refresh of the internal buffer, but otherwise be a no-op. - -### readable.pipe(destination, [options]) - -* `destination` {Writable Stream} -* `options` {Object} Optional - * `end` {Boolean} Default=true - -Connects this readable stream to `destination` WriteStream. Incoming -data on this stream gets written to `destination`. Properly manages -back-pressure so that a slow destination will not be overwhelmed by a -fast readable stream. - -This function returns the `destination` stream. - -For example, emulating the Unix `cat` command: - - process.stdin.pipe(process.stdout); - -By default `end()` is called on the destination when the source stream -emits `end`, so that `destination` is no longer writable. Pass `{ end: -false }` as `options` to keep the destination stream open. - -This keeps `writer` open so that "Goodbye" can be written at the -end. - - reader.pipe(writer, { end: false }); - reader.on("end", function() { - writer.end("Goodbye\n"); - }); - -Note that `process.stderr` and `process.stdout` are never closed until -the process exits, regardless of the specified options. - -### readable.unpipe([destination]) - -* `destination` {Writable Stream} Optional - -Undo a previously established `pipe()`. If no destination is -provided, then all previously established pipes are removed. - -### readable.pause() - -Switches the readable stream into "old mode", where data is emitted -using a `'data'` event rather than being buffered for consumption via -the `read()` method. - -Ceases the flow of data. No `'data'` events are emitted while the -stream is in a paused state. - -### readable.resume() - -Switches the readable stream into "old mode", where data is emitted -using a `'data'` event rather than being buffered for consumption via -the `read()` method. - -Resumes the incoming `'data'` events after a `pause()`. - - -## Class: stream.Writable - -<!--type=class--> - -A `Writable` Stream has the following methods, members, and events. - -Note that `stream.Writable` is an abstract class designed to be -extended with an underlying implementation of the -`_write(chunk, encoding, cb)` method. (See below.) - -### new stream.Writable([options]) - -* `options` {Object} - * `highWaterMark` {Number} Buffer level when `write()` starts - returning false. Default=16kb - * `decodeStrings` {Boolean} Whether or not to decode strings into - Buffers before passing them to `_write()`. Default=true - -In classes that extend the Writable class, make sure to call the -constructor so that the buffering settings can be properly -initialized. - -### writable.\_write(chunk, encoding, callback) - -* `chunk` {Buffer | String} The chunk to be written. Will always - be a buffer unless the `decodeStrings` option was set to `false`. -* `encoding` {String} If the chunk is a string, then this is the - encoding type. Ignore chunk is a buffer. Note that chunk will - **always** be a buffer unless the `decodeStrings` option is - explicitly set to `false`. -* `callback` {Function} Call this function (optionally with an error - argument) when you are done processing the supplied chunk. - -All Writable stream implementations must provide a `_write` method to -send data to the underlying resource. - -Note: **This function MUST NOT be called directly.** It should be -implemented by child classes, and called by the internal Writable -class methods only. - -Call the callback using the standard `callback(error)` pattern to -signal that the write completed successfully or with an error. - -If the `decodeStrings` flag is set in the constructor options, then -`chunk` may be a string rather than a Buffer, and `encoding` will -indicate the sort of string that it is. This is to support -implementations that have an optimized handling for certain string -data encodings. If you do not explicitly set the `decodeStrings` -option to `false`, then you can safely ignore the `encoding` argument, -and assume that `chunk` will always be a Buffer. - -This method is prefixed with an underscore because it is internal to -the class that defines it, and should not be called directly by user -programs. However, you **are** expected to override this method in -your own extension classes. - - -### writable.write(chunk, [encoding], [callback]) - -* `chunk` {Buffer | String} Data to be written -* `encoding` {String} Optional. If `chunk` is a string, then encoding - defaults to `'utf8'` -* `callback` {Function} Optional. Called when this chunk is - successfully written. -* Returns {Boolean} - -Writes `chunk` to the stream. Returns `true` if the data has been -flushed to the underlying resource. Returns `false` to indicate that -the buffer is full, and the data will be sent out in the future. The -`'drain'` event will indicate when the buffer is empty again. - -The specifics of when `write()` will return false, is determined by -the `highWaterMark` option provided to the constructor. - -### writable.end([chunk], [encoding], [callback]) - -* `chunk` {Buffer | String} Optional final data to be written -* `encoding` {String} Optional. If `chunk` is a string, then encoding - defaults to `'utf8'` -* `callback` {Function} Optional. Called when the final chunk is - successfully written. - -Call this method to signal the end of the data being written to the -stream. - -### Event: 'drain' - -Emitted when the stream's write queue empties and it's safe to write -without buffering again. Listen for it when `stream.write()` returns -`false`. - -### Event: 'close' - -Emitted when the underlying resource (for example, the backing file -descriptor) has been closed. Not all streams will emit this. - -### Event: 'finish' - -When `end()` is called and there are no more chunks to write, this -event is emitted. - -### Event: 'pipe' - -* `source` {Readable Stream} - -Emitted when the stream is passed to a readable stream's pipe method. - -### Event 'unpipe' - -* `source` {Readable Stream} - -Emitted when a previously established `pipe()` is removed using the -source Readable stream's `unpipe()` method. - -## Class: stream.Duplex - -<!--type=class--> - -A "duplex" stream is one that is both Readable and Writable, such as a -TCP socket connection. - -Note that `stream.Duplex` is an abstract class designed to be -extended with an underlying implementation of the `_read(size)` -and `_write(chunk, encoding, callback)` methods as you would with a Readable or -Writable stream class. - -Since JavaScript doesn't have multiple prototypal inheritance, this -class prototypally inherits from Readable, and then parasitically from -Writable. It is thus up to the user to implement both the lowlevel -`_read(n)` method as well as the lowlevel `_write(chunk, encoding, cb)` method -on extension duplex classes. - -### new stream.Duplex(options) - -* `options` {Object} Passed to both Writable and Readable - constructors. Also has the following fields: - * `allowHalfOpen` {Boolean} Default=true. If set to `false`, then - the stream will automatically end the readable side when the - writable side ends and vice versa. - -In classes that extend the Duplex class, make sure to call the -constructor so that the buffering settings can be properly -initialized. - -## Class: stream.Transform - -A "transform" stream is a duplex stream where the output is causally -connected in some way to the input, such as a zlib stream or a crypto -stream. - -There is no requirement that the output be the same size as the input, -the same number of chunks, or arrive at the same time. For example, a -Hash stream will only ever have a single chunk of output which is -provided when the input is ended. A zlib stream will either produce -much smaller or much larger than its input. - -Rather than implement the `_read()` and `_write()` methods, Transform -classes must implement the `_transform()` method, and may optionally -also implement the `_flush()` method. (See below.) - -### new stream.Transform([options]) - -* `options` {Object} Passed to both Writable and Readable - constructors. - -In classes that extend the Transform class, make sure to call the -constructor so that the buffering settings can be properly -initialized. - -### transform.\_transform(chunk, encoding, callback) - -* `chunk` {Buffer | String} The chunk to be transformed. Will always - be a buffer unless the `decodeStrings` option was set to `false`. -* `encoding` {String} If the chunk is a string, then this is the - encoding type. (Ignore if `decodeStrings` chunk is a buffer.) -* `callback` {Function} Call this function (optionally with an error - argument) when you are done processing the supplied chunk. - -Note: **This function MUST NOT be called directly.** It should be -implemented by child classes, and called by the internal Transform -class methods only. - -All Transform stream implementations must provide a `_transform` -method to accept input and produce output. - -`_transform` should do whatever has to be done in this specific -Transform class, to handle the bytes being written, and pass them off -to the readable portion of the interface. Do asynchronous I/O, -process things, and so on. - -Call `transform.push(outputChunk)` 0 or more times to generate output -from this input chunk, depending on how much data you want to output -as a result of this chunk. - -Call the callback function only when the current chunk is completely -consumed. Note that there may or may not be output as a result of any -particular input chunk. - -This method is prefixed with an underscore because it is internal to -the class that defines it, and should not be called directly by user -programs. However, you **are** expected to override this method in -your own extension classes. - -### transform.\_flush(callback) - -* `callback` {Function} Call this function (optionally with an error - argument) when you are done flushing any remaining data. - -Note: **This function MUST NOT be called directly.** It MAY be implemented -by child classes, and if so, will be called by the internal Transform -class methods only. - -In some cases, your transform operation may need to emit a bit more -data at the end of the stream. For example, a `Zlib` compression -stream will store up some internal state so that it can optimally -compress the output. At the end, however, it needs to do the best it -can with what is left, so that the data will be complete. - -In those cases, you can implement a `_flush` method, which will be -called at the very end, after all the written data is consumed, but -before emitting `end` to signal the end of the readable side. Just -like with `_transform`, call `transform.push(chunk)` zero or more -times, as appropriate, and call `callback` when the flush operation is -complete. - -This method is prefixed with an underscore because it is internal to -the class that defines it, and should not be called directly by user -programs. However, you **are** expected to override this method in -your own extension classes. - -### Example: `SimpleProtocol` parser - -The example above of a simple protocol parser can be implemented much -more simply by using the higher level `Transform` stream class. - -In this example, rather than providing the input as an argument, it -would be piped into the parser, which is a more idiomatic Node stream -approach. - -```javascript -function SimpleProtocol(options) { - if (!(this instanceof SimpleProtocol)) - return new SimpleProtocol(options); - - Transform.call(this, options); - this._inBody = false; - this._sawFirstCr = false; - this._rawHeader = []; - this.header = null; -} - -SimpleProtocol.prototype = Object.create( - Transform.prototype, { constructor: { value: SimpleProtocol }}); - -SimpleProtocol.prototype._transform = function(chunk, encoding, done) { - if (!this._inBody) { - // check if the chunk has a \n\n - var split = -1; - for (var i = 0; i < chunk.length; i++) { - if (chunk[i] === 10) { // '\n' - if (this._sawFirstCr) { - split = i; - break; - } else { - this._sawFirstCr = true; - } - } else { - this._sawFirstCr = false; - } - } - - if (split === -1) { - // still waiting for the \n\n - // stash the chunk, and try again. - this._rawHeader.push(chunk); - } else { - this._inBody = true; - var h = chunk.slice(0, split); - this._rawHeader.push(h); - var header = Buffer.concat(this._rawHeader).toString(); - try { - this.header = JSON.parse(header); - } catch (er) { - this.emit('error', new Error('invalid simple protocol data')); - return; - } - // and let them know that we are done parsing the header. - this.emit('header', this.header); - - // now, because we got some extra data, emit this first. - this.push(b); - } - } else { - // from there on, just provide the data to our consumer as-is. - this.push(b); - } - done(); -}; - -var parser = new SimpleProtocol(); -source.pipe(parser) - -// Now parser is a readable stream that will emit 'header' -// with the parsed header data. -``` - - -## Class: stream.PassThrough - -This is a trivial implementation of a `Transform` stream that simply -passes the input bytes across to the output. Its purpose is mainly -for examples and testing, but there are occasionally use cases where -it can come in handy. - - -[EventEmitter]: events.html#events_class_events_eventemitter diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/float.patch b/deps/npm/node_modules/sha/node_modules/readable-stream/float.patch deleted file mode 100644 index 0ad71a1f0d..0000000000 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/float.patch +++ /dev/null @@ -1,68 +0,0 @@ -diff --git a/lib/_stream_duplex.js b/lib/_stream_duplex.js -index c5a741c..a2e0d8e 100644 ---- a/lib/_stream_duplex.js -+++ b/lib/_stream_duplex.js -@@ -26,8 +26,8 @@ - - module.exports = Duplex; - var util = require('util'); --var Readable = require('_stream_readable'); --var Writable = require('_stream_writable'); -+var Readable = require('./_stream_readable'); -+var Writable = require('./_stream_writable'); - - util.inherits(Duplex, Readable); - -diff --git a/lib/_stream_passthrough.js b/lib/_stream_passthrough.js -index a5e9864..330c247 100644 ---- a/lib/_stream_passthrough.js -+++ b/lib/_stream_passthrough.js -@@ -25,7 +25,7 @@ - - module.exports = PassThrough; - --var Transform = require('_stream_transform'); -+var Transform = require('./_stream_transform'); - var util = require('util'); - util.inherits(PassThrough, Transform); - -diff --git a/lib/_stream_readable.js b/lib/_stream_readable.js -index 2259d2e..e6681ee 100644 ---- a/lib/_stream_readable.js -+++ b/lib/_stream_readable.js -@@ -23,6 +23,9 @@ module.exports = Readable; - Readable.ReadableState = ReadableState; - - var EE = require('events').EventEmitter; -+if (!EE.listenerCount) EE.listenerCount = function(emitter, type) { -+ return emitter.listeners(type).length; -+}; - var Stream = require('stream'); - var util = require('util'); - var StringDecoder; -diff --git a/lib/_stream_transform.js b/lib/_stream_transform.js -index e925b4b..f08b05e 100644 ---- a/lib/_stream_transform.js -+++ b/lib/_stream_transform.js -@@ -64,7 +64,7 @@ - - module.exports = Transform; - --var Duplex = require('_stream_duplex'); -+var Duplex = require('./_stream_duplex'); - var util = require('util'); - util.inherits(Transform, Duplex); - -diff --git a/lib/_stream_writable.js b/lib/_stream_writable.js -index a26f711..56ca47d 100644 ---- a/lib/_stream_writable.js -+++ b/lib/_stream_writable.js -@@ -109,7 +109,7 @@ function WritableState(options, stream) { - function Writable(options) { - // Writable ctor is applied to Duplexes, though they're not - // instanceof Writable, they're instanceof Readable. -- if (!(this instanceof Writable) && !(this instanceof Stream.Duplex)) -+ if (!(this instanceof Writable) && !(this instanceof require('./_stream_duplex'))) - return new Writable(options); - - this._writableState = new WritableState(options, this); diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_duplex.js b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_duplex.js index a2e0d8e0da..b513d61a96 100644 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_duplex.js +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_duplex.js @@ -25,13 +25,27 @@ // Writable. module.exports = Duplex; -var util = require('util'); + +/*<replacement>*/ +var objectKeys = Object.keys || function (obj) { + var keys = []; + for (var key in obj) keys.push(key); + return keys; +} +/*</replacement>*/ + + +/*<replacement>*/ +var util = require('core-util-is'); +util.inherits = require('inherits'); +/*</replacement>*/ + var Readable = require('./_stream_readable'); var Writable = require('./_stream_writable'); util.inherits(Duplex, Readable); -Object.keys(Writable.prototype).forEach(function(method) { +forEach(objectKeys(Writable.prototype), function(method) { if (!Duplex.prototype[method]) Duplex.prototype[method] = Writable.prototype[method]; }); @@ -67,3 +81,9 @@ function onend() { // But allow more writes to happen in this tick. process.nextTick(this.end.bind(this)); } + +function forEach (xs, f) { + for (var i = 0, l = xs.length; i < l; i++) { + f(xs[i], i); + } +} diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_passthrough.js b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_passthrough.js index 330c247d40..895ca50a1d 100644 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_passthrough.js +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_passthrough.js @@ -26,7 +26,12 @@ module.exports = PassThrough; var Transform = require('./_stream_transform'); -var util = require('util'); + +/*<replacement>*/ +var util = require('core-util-is'); +util.inherits = require('inherits'); +/*</replacement>*/ + util.inherits(PassThrough, Transform); function PassThrough(options) { diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_readable.js b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_readable.js index 0ac8534b7a..0ca7705284 100644 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_readable.js +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_readable.js @@ -20,14 +20,33 @@ // USE OR OTHER DEALINGS IN THE SOFTWARE. module.exports = Readable; + +/*<replacement>*/ +var isArray = require('isarray'); +/*</replacement>*/ + + +/*<replacement>*/ +var Buffer = require('buffer').Buffer; +/*</replacement>*/ + Readable.ReadableState = ReadableState; var EE = require('events').EventEmitter; + +/*<replacement>*/ if (!EE.listenerCount) EE.listenerCount = function(emitter, type) { return emitter.listeners(type).length; }; +/*</replacement>*/ + var Stream = require('stream'); -var util = require('util'); + +/*<replacement>*/ +var util = require('core-util-is'); +util.inherits = require('inherits'); +/*</replacement>*/ + var StringDecoder; util.inherits(Readable, Stream); @@ -94,7 +113,7 @@ function ReadableState(options, stream) { this.encoding = null; if (options.encoding) { if (!StringDecoder) - StringDecoder = require('string_decoder').StringDecoder; + StringDecoder = require('string_decoder/').StringDecoder; this.decoder = new StringDecoder(options.encoding); this.encoding = options.encoding; } @@ -195,7 +214,7 @@ function needMoreData(state) { // backwards compatibility. Readable.prototype.setEncoding = function(enc) { if (!StringDecoder) - StringDecoder = require('string_decoder').StringDecoder; + StringDecoder = require('string_decoder/').StringDecoder; this._readableState.decoder = new StringDecoder(enc); this._readableState.encoding = enc; }; @@ -372,7 +391,7 @@ function chunkInvalid(state, chunk) { function onEofChunk(stream, state) { - if (state.decoder && !state.ended && state.decoder.end) { + if (state.decoder && !state.ended) { var chunk = state.decoder.end(); if (chunk && chunk.length) { state.buffer.push(chunk); @@ -524,7 +543,7 @@ Readable.prototype.pipe = function(dest, pipeOpts) { // is attached before any userland ones. NEVER DO THIS. if (!dest._events || !dest._events.error) dest.on('error', onerror); - else if (Array.isArray(dest._events.error)) + else if (isArray(dest._events.error)) dest._events.error.unshift(onerror); else dest._events.error = [onerror, dest._events.error]; @@ -594,7 +613,7 @@ function flow(src) { if (state.pipesCount === 1) write(state.pipes, 0, null); else - state.pipes.forEach(write); + forEach(state.pipes, write); src.emit('data', chunk); @@ -672,7 +691,7 @@ Readable.prototype.unpipe = function(dest) { } // try to find the right one. - var i = state.pipes.indexOf(dest); + var i = indexOf(state.pipes, dest); if (i === -1) return this; @@ -818,7 +837,7 @@ Readable.prototype.wrap = function(stream) { // proxy certain important events. var events = ['error', 'close', 'destroy', 'pause', 'resume']; - events.forEach(function(ev) { + forEach(events, function(ev) { stream.on(ev, self.emit.bind(self, ev)); }); @@ -925,3 +944,16 @@ function endReadable(stream) { }); } } + +function forEach (xs, f) { + for (var i = 0, l = xs.length; i < l; i++) { + f(xs[i], i); + } +} + +function indexOf (xs, x) { + for (var i = 0, l = xs.length; i < l; i++) { + if (xs[i] === x) return i; + } + return -1; +} diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_transform.js b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_transform.js index 707e4b3bf0..eb188df3e8 100644 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_transform.js +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_transform.js @@ -65,7 +65,12 @@ module.exports = Transform; var Duplex = require('./_stream_duplex'); -var util = require('util'); + +/*<replacement>*/ +var util = require('core-util-is'); +util.inherits = require('inherits'); +/*</replacement>*/ + util.inherits(Transform, Duplex); diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_writable.js b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_writable.js index 4b976a9f3f..d0254d5a71 100644 --- a/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_writable.js +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/lib/_stream_writable.js @@ -24,10 +24,20 @@ // the drain event emission and buffering. module.exports = Writable; + +/*<replacement>*/ +var Buffer = require('buffer').Buffer; +/*</replacement>*/ + Writable.WritableState = WritableState; -var util = require('util'); -var assert = require('assert'); + +/*<replacement>*/ +var util = require('core-util-is'); +util.inherits = require('inherits'); +/*</replacement>*/ + + var Stream = require('stream'); util.inherits(Writable, Stream); @@ -104,12 +114,17 @@ function WritableState(options, stream) { this.writelen = 0; this.buffer = []; + + // True if the error was already emitted and should not be thrown again + this.errorEmitted = false; } function Writable(options) { + var Duplex = require('./_stream_duplex'); + // Writable ctor is applied to Duplexes, though they're not // instanceof Writable, they're instanceof Readable. - if (!(this instanceof Writable) && !(this instanceof require('./_stream_duplex'))) + if (!(this instanceof Writable) && !(this instanceof Duplex)) return new Writable(options); this._writableState = new WritableState(options, this); @@ -203,7 +218,9 @@ function writeOrBuffer(stream, state, chunk, encoding, cb) { state.length += len; var ret = state.length < state.highWaterMark; - state.needDrain = !ret; + // we must ensure that previous needDrain will not be reset to false. + if (!ret) + state.needDrain = true; if (state.writing) state.buffer.push(new WriteReq(chunk, encoding, cb)); @@ -230,6 +247,7 @@ function onwriteError(stream, state, sync, er, cb) { else cb(er); + stream._writableState.errorEmitted = true; stream.emit('error', er); } diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/README.md b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/README.md new file mode 100644 index 0000000000..5a76b4149c --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/README.md @@ -0,0 +1,3 @@ +# core-util-is + +The `util.is*` functions introduced in Node v0.12. diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/float.patch b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/float.patch new file mode 100644 index 0000000000..a06d5c05f7 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/float.patch @@ -0,0 +1,604 @@ +diff --git a/lib/util.js b/lib/util.js +index a03e874..9074e8e 100644 +--- a/lib/util.js ++++ b/lib/util.js +@@ -19,430 +19,6 @@ + // OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE + // USE OR OTHER DEALINGS IN THE SOFTWARE. + +-var formatRegExp = /%[sdj%]/g; +-exports.format = function(f) { +- if (!isString(f)) { +- var objects = []; +- for (var i = 0; i < arguments.length; i++) { +- objects.push(inspect(arguments[i])); +- } +- return objects.join(' '); +- } +- +- var i = 1; +- var args = arguments; +- var len = args.length; +- var str = String(f).replace(formatRegExp, function(x) { +- if (x === '%%') return '%'; +- if (i >= len) return x; +- switch (x) { +- case '%s': return String(args[i++]); +- case '%d': return Number(args[i++]); +- case '%j': +- try { +- return JSON.stringify(args[i++]); +- } catch (_) { +- return '[Circular]'; +- } +- default: +- return x; +- } +- }); +- for (var x = args[i]; i < len; x = args[++i]) { +- if (isNull(x) || !isObject(x)) { +- str += ' ' + x; +- } else { +- str += ' ' + inspect(x); +- } +- } +- return str; +-}; +- +- +-// Mark that a method should not be used. +-// Returns a modified function which warns once by default. +-// If --no-deprecation is set, then it is a no-op. +-exports.deprecate = function(fn, msg) { +- // Allow for deprecating things in the process of starting up. +- if (isUndefined(global.process)) { +- return function() { +- return exports.deprecate(fn, msg).apply(this, arguments); +- }; +- } +- +- if (process.noDeprecation === true) { +- return fn; +- } +- +- var warned = false; +- function deprecated() { +- if (!warned) { +- if (process.throwDeprecation) { +- throw new Error(msg); +- } else if (process.traceDeprecation) { +- console.trace(msg); +- } else { +- console.error(msg); +- } +- warned = true; +- } +- return fn.apply(this, arguments); +- } +- +- return deprecated; +-}; +- +- +-var debugs = {}; +-var debugEnviron; +-exports.debuglog = function(set) { +- if (isUndefined(debugEnviron)) +- debugEnviron = process.env.NODE_DEBUG || ''; +- set = set.toUpperCase(); +- if (!debugs[set]) { +- if (new RegExp('\\b' + set + '\\b', 'i').test(debugEnviron)) { +- var pid = process.pid; +- debugs[set] = function() { +- var msg = exports.format.apply(exports, arguments); +- console.error('%s %d: %s', set, pid, msg); +- }; +- } else { +- debugs[set] = function() {}; +- } +- } +- return debugs[set]; +-}; +- +- +-/** +- * Echos the value of a value. Trys to print the value out +- * in the best way possible given the different types. +- * +- * @param {Object} obj The object to print out. +- * @param {Object} opts Optional options object that alters the output. +- */ +-/* legacy: obj, showHidden, depth, colors*/ +-function inspect(obj, opts) { +- // default options +- var ctx = { +- seen: [], +- stylize: stylizeNoColor +- }; +- // legacy... +- if (arguments.length >= 3) ctx.depth = arguments[2]; +- if (arguments.length >= 4) ctx.colors = arguments[3]; +- if (isBoolean(opts)) { +- // legacy... +- ctx.showHidden = opts; +- } else if (opts) { +- // got an "options" object +- exports._extend(ctx, opts); +- } +- // set default options +- if (isUndefined(ctx.showHidden)) ctx.showHidden = false; +- if (isUndefined(ctx.depth)) ctx.depth = 2; +- if (isUndefined(ctx.colors)) ctx.colors = false; +- if (isUndefined(ctx.customInspect)) ctx.customInspect = true; +- if (ctx.colors) ctx.stylize = stylizeWithColor; +- return formatValue(ctx, obj, ctx.depth); +-} +-exports.inspect = inspect; +- +- +-// http://en.wikipedia.org/wiki/ANSI_escape_code#graphics +-inspect.colors = { +- 'bold' : [1, 22], +- 'italic' : [3, 23], +- 'underline' : [4, 24], +- 'inverse' : [7, 27], +- 'white' : [37, 39], +- 'grey' : [90, 39], +- 'black' : [30, 39], +- 'blue' : [34, 39], +- 'cyan' : [36, 39], +- 'green' : [32, 39], +- 'magenta' : [35, 39], +- 'red' : [31, 39], +- 'yellow' : [33, 39] +-}; +- +-// Don't use 'blue' not visible on cmd.exe +-inspect.styles = { +- 'special': 'cyan', +- 'number': 'yellow', +- 'boolean': 'yellow', +- 'undefined': 'grey', +- 'null': 'bold', +- 'string': 'green', +- 'date': 'magenta', +- // "name": intentionally not styling +- 'regexp': 'red' +-}; +- +- +-function stylizeWithColor(str, styleType) { +- var style = inspect.styles[styleType]; +- +- if (style) { +- return '\u001b[' + inspect.colors[style][0] + 'm' + str + +- '\u001b[' + inspect.colors[style][1] + 'm'; +- } else { +- return str; +- } +-} +- +- +-function stylizeNoColor(str, styleType) { +- return str; +-} +- +- +-function arrayToHash(array) { +- var hash = {}; +- +- array.forEach(function(val, idx) { +- hash[val] = true; +- }); +- +- return hash; +-} +- +- +-function formatValue(ctx, value, recurseTimes) { +- // Provide a hook for user-specified inspect functions. +- // Check that value is an object with an inspect function on it +- if (ctx.customInspect && +- value && +- isFunction(value.inspect) && +- // Filter out the util module, it's inspect function is special +- value.inspect !== exports.inspect && +- // Also filter out any prototype objects using the circular check. +- !(value.constructor && value.constructor.prototype === value)) { +- var ret = value.inspect(recurseTimes, ctx); +- if (!isString(ret)) { +- ret = formatValue(ctx, ret, recurseTimes); +- } +- return ret; +- } +- +- // Primitive types cannot have properties +- var primitive = formatPrimitive(ctx, value); +- if (primitive) { +- return primitive; +- } +- +- // Look up the keys of the object. +- var keys = Object.keys(value); +- var visibleKeys = arrayToHash(keys); +- +- if (ctx.showHidden) { +- keys = Object.getOwnPropertyNames(value); +- } +- +- // Some type of object without properties can be shortcutted. +- if (keys.length === 0) { +- if (isFunction(value)) { +- var name = value.name ? ': ' + value.name : ''; +- return ctx.stylize('[Function' + name + ']', 'special'); +- } +- if (isRegExp(value)) { +- return ctx.stylize(RegExp.prototype.toString.call(value), 'regexp'); +- } +- if (isDate(value)) { +- return ctx.stylize(Date.prototype.toString.call(value), 'date'); +- } +- if (isError(value)) { +- return formatError(value); +- } +- } +- +- var base = '', array = false, braces = ['{', '}']; +- +- // Make Array say that they are Array +- if (isArray(value)) { +- array = true; +- braces = ['[', ']']; +- } +- +- // Make functions say that they are functions +- if (isFunction(value)) { +- var n = value.name ? ': ' + value.name : ''; +- base = ' [Function' + n + ']'; +- } +- +- // Make RegExps say that they are RegExps +- if (isRegExp(value)) { +- base = ' ' + RegExp.prototype.toString.call(value); +- } +- +- // Make dates with properties first say the date +- if (isDate(value)) { +- base = ' ' + Date.prototype.toUTCString.call(value); +- } +- +- // Make error with message first say the error +- if (isError(value)) { +- base = ' ' + formatError(value); +- } +- +- if (keys.length === 0 && (!array || value.length == 0)) { +- return braces[0] + base + braces[1]; +- } +- +- if (recurseTimes < 0) { +- if (isRegExp(value)) { +- return ctx.stylize(RegExp.prototype.toString.call(value), 'regexp'); +- } else { +- return ctx.stylize('[Object]', 'special'); +- } +- } +- +- ctx.seen.push(value); +- +- var output; +- if (array) { +- output = formatArray(ctx, value, recurseTimes, visibleKeys, keys); +- } else { +- output = keys.map(function(key) { +- return formatProperty(ctx, value, recurseTimes, visibleKeys, key, array); +- }); +- } +- +- ctx.seen.pop(); +- +- return reduceToSingleString(output, base, braces); +-} +- +- +-function formatPrimitive(ctx, value) { +- if (isUndefined(value)) +- return ctx.stylize('undefined', 'undefined'); +- if (isString(value)) { +- var simple = '\'' + JSON.stringify(value).replace(/^"|"$/g, '') +- .replace(/'/g, "\\'") +- .replace(/\\"/g, '"') + '\''; +- return ctx.stylize(simple, 'string'); +- } +- if (isNumber(value)) { +- // Format -0 as '-0'. Strict equality won't distinguish 0 from -0, +- // so instead we use the fact that 1 / -0 < 0 whereas 1 / 0 > 0 . +- if (value === 0 && 1 / value < 0) +- return ctx.stylize('-0', 'number'); +- return ctx.stylize('' + value, 'number'); +- } +- if (isBoolean(value)) +- return ctx.stylize('' + value, 'boolean'); +- // For some reason typeof null is "object", so special case here. +- if (isNull(value)) +- return ctx.stylize('null', 'null'); +-} +- +- +-function formatError(value) { +- return '[' + Error.prototype.toString.call(value) + ']'; +-} +- +- +-function formatArray(ctx, value, recurseTimes, visibleKeys, keys) { +- var output = []; +- for (var i = 0, l = value.length; i < l; ++i) { +- if (hasOwnProperty(value, String(i))) { +- output.push(formatProperty(ctx, value, recurseTimes, visibleKeys, +- String(i), true)); +- } else { +- output.push(''); +- } +- } +- keys.forEach(function(key) { +- if (!key.match(/^\d+$/)) { +- output.push(formatProperty(ctx, value, recurseTimes, visibleKeys, +- key, true)); +- } +- }); +- return output; +-} +- +- +-function formatProperty(ctx, value, recurseTimes, visibleKeys, key, array) { +- var name, str, desc; +- desc = Object.getOwnPropertyDescriptor(value, key) || { value: value[key] }; +- if (desc.get) { +- if (desc.set) { +- str = ctx.stylize('[Getter/Setter]', 'special'); +- } else { +- str = ctx.stylize('[Getter]', 'special'); +- } +- } else { +- if (desc.set) { +- str = ctx.stylize('[Setter]', 'special'); +- } +- } +- if (!hasOwnProperty(visibleKeys, key)) { +- name = '[' + key + ']'; +- } +- if (!str) { +- if (ctx.seen.indexOf(desc.value) < 0) { +- if (isNull(recurseTimes)) { +- str = formatValue(ctx, desc.value, null); +- } else { +- str = formatValue(ctx, desc.value, recurseTimes - 1); +- } +- if (str.indexOf('\n') > -1) { +- if (array) { +- str = str.split('\n').map(function(line) { +- return ' ' + line; +- }).join('\n').substr(2); +- } else { +- str = '\n' + str.split('\n').map(function(line) { +- return ' ' + line; +- }).join('\n'); +- } +- } +- } else { +- str = ctx.stylize('[Circular]', 'special'); +- } +- } +- if (isUndefined(name)) { +- if (array && key.match(/^\d+$/)) { +- return str; +- } +- name = JSON.stringify('' + key); +- if (name.match(/^"([a-zA-Z_][a-zA-Z_0-9]*)"$/)) { +- name = name.substr(1, name.length - 2); +- name = ctx.stylize(name, 'name'); +- } else { +- name = name.replace(/'/g, "\\'") +- .replace(/\\"/g, '"') +- .replace(/(^"|"$)/g, "'"); +- name = ctx.stylize(name, 'string'); +- } +- } +- +- return name + ': ' + str; +-} +- +- +-function reduceToSingleString(output, base, braces) { +- var numLinesEst = 0; +- var length = output.reduce(function(prev, cur) { +- numLinesEst++; +- if (cur.indexOf('\n') >= 0) numLinesEst++; +- return prev + cur.replace(/\u001b\[\d\d?m/g, '').length + 1; +- }, 0); +- +- if (length > 60) { +- return braces[0] + +- (base === '' ? '' : base + '\n ') + +- ' ' + +- output.join(',\n ') + +- ' ' + +- braces[1]; +- } +- +- return braces[0] + base + ' ' + output.join(', ') + ' ' + braces[1]; +-} +- +- + // NOTE: These type checking functions intentionally don't use `instanceof` + // because it is fragile and can be easily faked with `Object.create()`. + function isArray(ar) { +@@ -522,166 +98,10 @@ function isPrimitive(arg) { + exports.isPrimitive = isPrimitive; + + function isBuffer(arg) { +- return arg instanceof Buffer; ++ return Buffer.isBuffer(arg); + } + exports.isBuffer = isBuffer; + + function objectToString(o) { + return Object.prototype.toString.call(o); +-} +- +- +-function pad(n) { +- return n < 10 ? '0' + n.toString(10) : n.toString(10); +-} +- +- +-var months = ['Jan', 'Feb', 'Mar', 'Apr', 'May', 'Jun', 'Jul', 'Aug', 'Sep', +- 'Oct', 'Nov', 'Dec']; +- +-// 26 Feb 16:19:34 +-function timestamp() { +- var d = new Date(); +- var time = [pad(d.getHours()), +- pad(d.getMinutes()), +- pad(d.getSeconds())].join(':'); +- return [d.getDate(), months[d.getMonth()], time].join(' '); +-} +- +- +-// log is just a thin wrapper to console.log that prepends a timestamp +-exports.log = function() { +- console.log('%s - %s', timestamp(), exports.format.apply(exports, arguments)); +-}; +- +- +-/** +- * Inherit the prototype methods from one constructor into another. +- * +- * The Function.prototype.inherits from lang.js rewritten as a standalone +- * function (not on Function.prototype). NOTE: If this file is to be loaded +- * during bootstrapping this function needs to be rewritten using some native +- * functions as prototype setup using normal JavaScript does not work as +- * expected during bootstrapping (see mirror.js in r114903). +- * +- * @param {function} ctor Constructor function which needs to inherit the +- * prototype. +- * @param {function} superCtor Constructor function to inherit prototype from. +- */ +-exports.inherits = function(ctor, superCtor) { +- ctor.super_ = superCtor; +- ctor.prototype = Object.create(superCtor.prototype, { +- constructor: { +- value: ctor, +- enumerable: false, +- writable: true, +- configurable: true +- } +- }); +-}; +- +-exports._extend = function(origin, add) { +- // Don't do anything if add isn't an object +- if (!add || !isObject(add)) return origin; +- +- var keys = Object.keys(add); +- var i = keys.length; +- while (i--) { +- origin[keys[i]] = add[keys[i]]; +- } +- return origin; +-}; +- +-function hasOwnProperty(obj, prop) { +- return Object.prototype.hasOwnProperty.call(obj, prop); +-} +- +- +-// Deprecated old stuff. +- +-exports.p = exports.deprecate(function() { +- for (var i = 0, len = arguments.length; i < len; ++i) { +- console.error(exports.inspect(arguments[i])); +- } +-}, 'util.p: Use console.error() instead'); +- +- +-exports.exec = exports.deprecate(function() { +- return require('child_process').exec.apply(this, arguments); +-}, 'util.exec is now called `child_process.exec`.'); +- +- +-exports.print = exports.deprecate(function() { +- for (var i = 0, len = arguments.length; i < len; ++i) { +- process.stdout.write(String(arguments[i])); +- } +-}, 'util.print: Use console.log instead'); +- +- +-exports.puts = exports.deprecate(function() { +- for (var i = 0, len = arguments.length; i < len; ++i) { +- process.stdout.write(arguments[i] + '\n'); +- } +-}, 'util.puts: Use console.log instead'); +- +- +-exports.debug = exports.deprecate(function(x) { +- process.stderr.write('DEBUG: ' + x + '\n'); +-}, 'util.debug: Use console.error instead'); +- +- +-exports.error = exports.deprecate(function(x) { +- for (var i = 0, len = arguments.length; i < len; ++i) { +- process.stderr.write(arguments[i] + '\n'); +- } +-}, 'util.error: Use console.error instead'); +- +- +-exports.pump = exports.deprecate(function(readStream, writeStream, callback) { +- var callbackCalled = false; +- +- function call(a, b, c) { +- if (callback && !callbackCalled) { +- callback(a, b, c); +- callbackCalled = true; +- } +- } +- +- readStream.addListener('data', function(chunk) { +- if (writeStream.write(chunk) === false) readStream.pause(); +- }); +- +- writeStream.addListener('drain', function() { +- readStream.resume(); +- }); +- +- readStream.addListener('end', function() { +- writeStream.end(); +- }); +- +- readStream.addListener('close', function() { +- call(); +- }); +- +- readStream.addListener('error', function(err) { +- writeStream.end(); +- call(err); +- }); +- +- writeStream.addListener('error', function(err) { +- readStream.destroy(); +- call(err); +- }); +-}, 'util.pump(): Use readableStream.pipe() instead'); +- +- +-var uv; +-exports._errnoException = function(err, syscall) { +- if (isUndefined(uv)) uv = process.binding('uv'); +- var errname = uv.errname(err); +- var e = new Error(syscall + ' ' + errname); +- e.code = errname; +- e.errno = errname; +- e.syscall = syscall; +- return e; +-}; ++}

\ No newline at end of file diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/lib/util.js b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/lib/util.js new file mode 100644 index 0000000000..9074e8ebcb --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/lib/util.js @@ -0,0 +1,107 @@ +// Copyright Joyent, Inc. and other Node contributors. +// +// Permission is hereby granted, free of charge, to any person obtaining a +// copy of this software and associated documentation files (the +// "Software"), to deal in the Software without restriction, including +// without limitation the rights to use, copy, modify, merge, publish, +// distribute, sublicense, and/or sell copies of the Software, and to permit +// persons to whom the Software is furnished to do so, subject to the +// following conditions: +// +// The above copyright notice and this permission notice shall be included +// in all copies or substantial portions of the Software. +// +// THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS +// OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF +// MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN +// NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, +// DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR +// OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE +// USE OR OTHER DEALINGS IN THE SOFTWARE. + +// NOTE: These type checking functions intentionally don't use `instanceof` +// because it is fragile and can be easily faked with `Object.create()`. +function isArray(ar) { + return Array.isArray(ar); +} +exports.isArray = isArray; + +function isBoolean(arg) { + return typeof arg === 'boolean'; +} +exports.isBoolean = isBoolean; + +function isNull(arg) { + return arg === null; +} +exports.isNull = isNull; + +function isNullOrUndefined(arg) { + return arg == null; +} +exports.isNullOrUndefined = isNullOrUndefined; + +function isNumber(arg) { + return typeof arg === 'number'; +} +exports.isNumber = isNumber; + +function isString(arg) { + return typeof arg === 'string'; +} +exports.isString = isString; + +function isSymbol(arg) { + return typeof arg === 'symbol'; +} +exports.isSymbol = isSymbol; + +function isUndefined(arg) { + return arg === void 0; +} +exports.isUndefined = isUndefined; + +function isRegExp(re) { + return isObject(re) && objectToString(re) === '[object RegExp]'; +} +exports.isRegExp = isRegExp; + +function isObject(arg) { + return typeof arg === 'object' && arg !== null; +} +exports.isObject = isObject; + +function isDate(d) { + return isObject(d) && objectToString(d) === '[object Date]'; +} +exports.isDate = isDate; + +function isError(e) { + return isObject(e) && + (objectToString(e) === '[object Error]' || e instanceof Error); +} +exports.isError = isError; + +function isFunction(arg) { + return typeof arg === 'function'; +} +exports.isFunction = isFunction; + +function isPrimitive(arg) { + return arg === null || + typeof arg === 'boolean' || + typeof arg === 'number' || + typeof arg === 'string' || + typeof arg === 'symbol' || // ES6 symbol + typeof arg === 'undefined'; +} +exports.isPrimitive = isPrimitive; + +function isBuffer(arg) { + return Buffer.isBuffer(arg); +} +exports.isBuffer = isBuffer; + +function objectToString(o) { + return Object.prototype.toString.call(o); +}

\ No newline at end of file diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/package.json b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/package.json new file mode 100644 index 0000000000..2b7593c193 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/package.json @@ -0,0 +1,54 @@ +{ + "name": "core-util-is", + "version": "1.0.1", + "description": "The `util.is*` functions introduced in Node v0.12.", + "main": "lib/util.js", + "repository": { + "type": "git", + "url": "git://github.com/isaacs/core-util-is" + }, + "keywords": [ + "util", + "isBuffer", + "isArray", + "isNumber", + "isString", + "isRegExp", + "isThis", + "isThat", + "polyfill" + ], + "author": { + "name": "Isaac Z. Schlueter", + "email": "i@izs.me", + "url": "http://blog.izs.me/" + }, + "license": "MIT", + "bugs": { + "url": "https://github.com/isaacs/core-util-is/issues" + }, + "readme": "# core-util-is\n\nThe `util.is*` functions introduced in Node v0.12.\n", + "readmeFilename": "README.md", + "homepage": "https://github.com/isaacs/core-util-is", + "_id": "core-util-is@1.0.1", + "dist": { + "shasum": "6b07085aef9a3ccac6ee53bf9d3df0c1521a5538", + "tarball": "http://registry.npmjs.org/core-util-is/-/core-util-is-1.0.1.tgz" + }, + "_from": "core-util-is@~1.0.0", + "_npmVersion": "1.3.23", + "_npmUser": { + "name": "isaacs", + "email": "i@izs.me" + }, + "maintainers": [ + { + "name": "isaacs", + "email": "i@izs.me" + } + ], + "directories": {}, + "_shasum": "6b07085aef9a3ccac6ee53bf9d3df0c1521a5538", + "_resolved": "https://registry.npmjs.org/core-util-is/-/core-util-is-1.0.1.tgz", + "scripts": {} +} diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/util.js b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/util.js new file mode 100644 index 0000000000..007fa10575 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/core-util-is/util.js @@ -0,0 +1,106 @@ +// Copyright Joyent, Inc. and other Node contributors. +// +// Permission is hereby granted, free of charge, to any person obtaining a +// copy of this software and associated documentation files (the +// "Software"), to deal in the Software without restriction, including +// without limitation the rights to use, copy, modify, merge, publish, +// distribute, sublicense, and/or sell copies of the Software, and to permit +// persons to whom the Software is furnished to do so, subject to the +// following conditions: +// +// The above copyright notice and this permission notice shall be included +// in all copies or substantial portions of the Software. +// +// THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS +// OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF +// MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN +// NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, +// DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR +// OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE +// USE OR OTHER DEALINGS IN THE SOFTWARE. + +// NOTE: These type checking functions intentionally don't use `instanceof` +// because it is fragile and can be easily faked with `Object.create()`. +function isArray(ar) { + return Array.isArray(ar); +} +exports.isArray = isArray; + +function isBoolean(arg) { + return typeof arg === 'boolean'; +} +exports.isBoolean = isBoolean; + +function isNull(arg) { + return arg === null; +} +exports.isNull = isNull; + +function isNullOrUndefined(arg) { + return arg == null; +} +exports.isNullOrUndefined = isNullOrUndefined; + +function isNumber(arg) { + return typeof arg === 'number'; +} +exports.isNumber = isNumber; + +function isString(arg) { + return typeof arg === 'string'; +} +exports.isString = isString; + +function isSymbol(arg) { + return typeof arg === 'symbol'; +} +exports.isSymbol = isSymbol; + +function isUndefined(arg) { + return arg === void 0; +} +exports.isUndefined = isUndefined; + +function isRegExp(re) { + return isObject(re) && objectToString(re) === '[object RegExp]'; +} +exports.isRegExp = isRegExp; + +function isObject(arg) { + return typeof arg === 'object' && arg !== null; +} +exports.isObject = isObject; + +function isDate(d) { + return isObject(d) && objectToString(d) === '[object Date]'; +} +exports.isDate = isDate; + +function isError(e) { + return isObject(e) && objectToString(e) === '[object Error]'; +} +exports.isError = isError; + +function isFunction(arg) { + return typeof arg === 'function'; +} +exports.isFunction = isFunction; + +function isPrimitive(arg) { + return arg === null || + typeof arg === 'boolean' || + typeof arg === 'number' || + typeof arg === 'string' || + typeof arg === 'symbol' || // ES6 symbol + typeof arg === 'undefined'; +} +exports.isPrimitive = isPrimitive; + +function isBuffer(arg) { + return arg instanceof Buffer; +} +exports.isBuffer = isBuffer; + +function objectToString(o) { + return Object.prototype.toString.call(o); +} diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/README.md b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/README.md new file mode 100644 index 0000000000..052a62b8d7 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/README.md @@ -0,0 +1,54 @@ + +# isarray + +`Array#isArray` for older browsers. + +## Usage + +```js +var isArray = require('isarray'); + +console.log(isArray([])); // => true +console.log(isArray({})); // => false +``` + +## Installation + +With [npm](http://npmjs.org) do + +```bash +$ npm install isarray +``` + +Then bundle for the browser with +[browserify](https://github.com/substack/browserify). + +With [component](http://component.io) do + +```bash +$ component install juliangruber/isarray +``` + +## License + +(MIT) + +Copyright (c) 2013 Julian Gruber <julian@juliangruber.com> + +Permission is hereby granted, free of charge, to any person obtaining a copy of +this software and associated documentation files (the "Software"), to deal in +the Software without restriction, including without limitation the rights to +use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies +of the Software, and to permit persons to whom the Software is furnished to do +so, subject to the following conditions: + +The above copyright notice and this permission notice shall be included in all +copies or substantial portions of the Software. + +THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR +IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, +FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE +AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER +LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, +OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE +SOFTWARE. diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/build/build.js b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/build/build.js new file mode 100644 index 0000000000..ec58596aee --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/build/build.js @@ -0,0 +1,209 @@ + +/** + * Require the given path. + * + * @param {String} path + * @return {Object} exports + * @api public + */ + +function require(path, parent, orig) { + var resolved = require.resolve(path); + + // lookup failed + if (null == resolved) { + orig = orig || path; + parent = parent || 'root'; + var err = new Error('Failed to require "' + orig + '" from "' + parent + '"'); + err.path = orig; + err.parent = parent; + err.require = true; + throw err; + } + + var module = require.modules[resolved]; + + // perform real require() + // by invoking the module's + // registered function + if (!module.exports) { + module.exports = {}; + module.client = module.component = true; + module.call(this, module.exports, require.relative(resolved), module); + } + + return module.exports; +} + +/** + * Registered modules. + */ + +require.modules = {}; + +/** + * Registered aliases. + */ + +require.aliases = {}; + +/** + * Resolve `path`. + * + * Lookup: + * + * - PATH/index.js + * - PATH.js + * - PATH + * + * @param {String} path + * @return {String} path or null + * @api private + */ + +require.resolve = function(path) { + if (path.charAt(0) === '/') path = path.slice(1); + var index = path + '/index.js'; + + var paths = [ + path, + path + '.js', + path + '.json', + path + '/index.js', + path + '/index.json' + ]; + + for (var i = 0; i < paths.length; i++) { + var path = paths[i]; + if (require.modules.hasOwnProperty(path)) return path; + } + + if (require.aliases.hasOwnProperty(index)) { + return require.aliases[index]; + } +}; + +/** + * Normalize `path` relative to the current path. + * + * @param {String} curr + * @param {String} path + * @return {String} + * @api private + */ + +require.normalize = function(curr, path) { + var segs = []; + + if ('.' != path.charAt(0)) return path; + + curr = curr.split('/'); + path = path.split('/'); + + for (var i = 0; i < path.length; ++i) { + if ('..' == path[i]) { + curr.pop(); + } else if ('.' != path[i] && '' != path[i]) { + segs.push(path[i]); + } + } + + return curr.concat(segs).join('/'); +}; + +/** + * Register module at `path` with callback `definition`. + * + * @param {String} path + * @param {Function} definition + * @api private + */ + +require.register = function(path, definition) { + require.modules[path] = definition; +}; + +/** + * Alias a module definition. + * + * @param {String} from + * @param {String} to + * @api private + */ + +require.alias = function(from, to) { + if (!require.modules.hasOwnProperty(from)) { + throw new Error('Failed to alias "' + from + '", it does not exist'); + } + require.aliases[to] = from; +}; + +/** + * Return a require function relative to the `parent` path. + * + * @param {String} parent + * @return {Function} + * @api private + */ + +require.relative = function(parent) { + var p = require.normalize(parent, '..'); + + /** + * lastIndexOf helper. + */ + + function lastIndexOf(arr, obj) { + var i = arr.length; + while (i--) { + if (arr[i] === obj) return i; + } + return -1; + } + + /** + * The relative require() itself. + */ + + function localRequire(path) { + var resolved = localRequire.resolve(path); + return require(resolved, parent, path); + } + + /** + * Resolve relative to the parent. + */ + + localRequire.resolve = function(path) { + var c = path.charAt(0); + if ('/' == c) return path.slice(1); + if ('.' == c) return require.normalize(p, path); + + // resolve deps by returning + // the dep in the nearest "deps" + // directory + var segs = parent.split('/'); + var i = lastIndexOf(segs, 'deps') + 1; + if (!i) i = 0; + path = segs.slice(0, i + 1).join('/') + '/deps/' + path; + return path; + }; + + /** + * Check if module is defined at `path`. + */ + + localRequire.exists = function(path) { + return require.modules.hasOwnProperty(localRequire.resolve(path)); + }; + + return localRequire; +}; +require.register("isarray/index.js", function(exports, require, module){ +module.exports = Array.isArray || function (arr) { + return Object.prototype.toString.call(arr) == '[object Array]'; +}; + +}); +require.alias("isarray/index.js", "isarray/index.js"); + diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/component.json b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/component.json new file mode 100644 index 0000000000..9e31b68388 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/component.json @@ -0,0 +1,19 @@ +{ + "name" : "isarray", + "description" : "Array#isArray for older browsers", + "version" : "0.0.1", + "repository" : "juliangruber/isarray", + "homepage": "https://github.com/juliangruber/isarray", + "main" : "index.js", + "scripts" : [ + "index.js" + ], + "dependencies" : {}, + "keywords": ["browser","isarray","array"], + "author": { + "name": "Julian Gruber", + "email": "mail@juliangruber.com", + "url": "http://juliangruber.com" + }, + "license": "MIT" +} diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/index.js b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/index.js new file mode 100644 index 0000000000..5f5ad45d46 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/index.js @@ -0,0 +1,3 @@ +module.exports = Array.isArray || function (arr) { + return Object.prototype.toString.call(arr) == '[object Array]'; +}; diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/package.json b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/package.json new file mode 100644 index 0000000000..fc7904b67b --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/isarray/package.json @@ -0,0 +1,54 @@ +{ + "name": "isarray", + "description": "Array#isArray for older browsers", + "version": "0.0.1", + "repository": { + "type": "git", + "url": "git://github.com/juliangruber/isarray.git" + }, + "homepage": "https://github.com/juliangruber/isarray", + "main": "index.js", + "scripts": { + "test": "tap test/*.js" + }, + "dependencies": {}, + "devDependencies": { + "tap": "*" + }, + "keywords": [ + "browser", + "isarray", + "array" + ], + "author": { + "name": "Julian Gruber", + "email": "mail@juliangruber.com", + "url": "http://juliangruber.com" + }, + "license": "MIT", + "readme": "\n# isarray\n\n`Array#isArray` for older browsers.\n\n## Usage\n\n```js\nvar isArray = require('isarray');\n\nconsole.log(isArray([])); // => true\nconsole.log(isArray({})); // => false\n```\n\n## Installation\n\nWith [npm](http://npmjs.org) do\n\n```bash\n$ npm install isarray\n```\n\nThen bundle for the browser with\n[browserify](https://github.com/substack/browserify).\n\nWith [component](http://component.io) do\n\n```bash\n$ component install juliangruber/isarray\n```\n\n## License\n\n(MIT)\n\nCopyright (c) 2013 Julian Gruber <julian@juliangruber.com>\n\nPermission is hereby granted, free of charge, to any person obtaining a copy of\nthis software and associated documentation files (the \"Software\"), to deal in\nthe Software without restriction, including without limitation the rights to\nuse, copy, modify, merge, publish, distribute, sublicense, and/or sell copies\nof the Software, and to permit persons to whom the Software is furnished to do\nso, subject to the following conditions:\n\nThe above copyright notice and this permission notice shall be included in all\ncopies or substantial portions of the Software.\n\nTHE SOFTWARE IS PROVIDED \"AS IS\", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR\nIMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,\nFITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE\nAUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER\nLIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,\nOUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE\nSOFTWARE.\n", + "readmeFilename": "README.md", + "_id": "isarray@0.0.1", + "dist": { + "shasum": "8a18acfca9a8f4177e09abfc6038939b05d1eedf", + "tarball": "http://registry.npmjs.org/isarray/-/isarray-0.0.1.tgz" + }, + "_from": "isarray@0.0.1", + "_npmVersion": "1.2.18", + "_npmUser": { + "name": "juliangruber", + "email": "julian@juliangruber.com" + }, + "maintainers": [ + { + "name": "juliangruber", + "email": "julian@juliangruber.com" + } + ], + "directories": {}, + "_shasum": "8a18acfca9a8f4177e09abfc6038939b05d1eedf", + "_resolved": "https://registry.npmjs.org/isarray/-/isarray-0.0.1.tgz", + "bugs": { + "url": "https://github.com/juliangruber/isarray/issues" + } +} diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/.npmignore b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/.npmignore new file mode 100644 index 0000000000..206320cc1d --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/.npmignore @@ -0,0 +1,2 @@ +build +test diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/LICENSE b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/LICENSE new file mode 100644 index 0000000000..6de584a48f --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/LICENSE @@ -0,0 +1,20 @@ +Copyright Joyent, Inc. and other Node contributors. + +Permission is hereby granted, free of charge, to any person obtaining a +copy of this software and associated documentation files (the +"Software"), to deal in the Software without restriction, including +without limitation the rights to use, copy, modify, merge, publish, +distribute, sublicense, and/or sell copies of the Software, and to permit +persons to whom the Software is furnished to do so, subject to the +following conditions: + +The above copyright notice and this permission notice shall be included +in all copies or substantial portions of the Software. + +THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS +OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF +MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN +NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, +DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR +OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE +USE OR OTHER DEALINGS IN THE SOFTWARE. diff --git a/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/README.md b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/README.md new file mode 100644 index 0000000000..4d2aa00150 --- /dev/null +++ b/deps/npm/node_modules/sha/node_modules/readable-stream/node_modules/string_decoder/README.md @@ -0,0 +1,7 @@ +**string_decoder.js** (`require('string_decoder')`) from Node.js core + +Copyright Joyent, Inc. and other Node contributors. See LICENCE file for details. + +Version numbers match the versions found in Node core, e.g. 0.10.24 matches Node 0.10.24, likewise 0.11.10 matches Node 0.11.10. **Prefer the stable version over the unstable.** + +The *build/* directory contains a build script that will scrape the source from the [joyent/node](https://github.com/joyent/node) repo given a specific Node version.