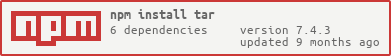

Diffstat (limited to 'deps/npm/node_modules/node-gyp/node_modules/tar')

38 files changed, 5656 insertions, 0 deletions